A webmaster's job is to protect the website from malicious traffic. One practice that serves this purpose is collecting and analysing the website's HTTP access logs.

I have developed a Rust library and command-line tools to help distinguish malicious traffic from actual visitors.

Malicious traffic

Not all website visitors are people interested in its content. Websites also receive a steady stream of requests from web crawler programs called "bots".

Not all bots are welcome to crawl the website. Some are poorly implemented and do not respect the rules defined in robots.txt or the maximum request rates signalled by HTTP 429 (Too Many Requests) responses. Bad actors can also program bots for malicious purposes, such as cloning the website (hosting a copy of it for phishing or ad revenue) or blocking access to it with a denial-of-service attack.

This bot traffic can lead to high server resource utilization and, in the end, to slower performance for the visitors.

Autonomous System Number database

One way I keep an eye on bot traffic is by using information from the Autonomous System Number (ASN) database. This database maps IP network ranges (prefixes) to their assigned ASN, along with the name of the operator and its country. This data reflects the ownership of different blocks of IP address space.

The IPtoASN website, maintained by Frank Denis, publishes recent ASN database files in tab-separated values (TSV) format.
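Because the files are plain TSV, a record can be parsed with the Rust standard library alone. The sketch below assumes the IPv4 file's column order of range start, range end, AS number, country code and AS description; `AsnRecord` and `parse_line` are hypothetical names for illustration, not part of the asn-db API.

```rust
use std::net::Ipv4Addr;

/// One row of an IPtoASN-style TSV file (hypothetical type, not asn-db's).
#[derive(Debug, Clone, PartialEq)]
pub struct AsnRecord {
    pub range_start: Ipv4Addr,
    pub range_end: Ipv4Addr,
    pub as_number: u32,
    pub country: String,
    pub owner: String,
}

/// Parses one line, ASSUMING the column order:
/// range start, range end, AS number, country code, AS description.
/// Returns `None` for lines that do not match that layout.
pub fn parse_line(line: &str) -> Option<AsnRecord> {
    // splitn(5, ..) keeps any tab inside the last column as part of the description.
    let mut cols = line.splitn(5, '\t');
    let range_start: Ipv4Addr = cols.next()?.parse().ok()?;
    let range_end: Ipv4Addr = cols.next()?.parse().ok()?;
    let as_number: u32 = cols.next()?.trim().parse().ok()?;
    let country = cols.next()?.to_string();
    let owner = cols.next()?.trim_end().to_string();
    Some(AsnRecord { range_start, range_end, as_number, country, owner })
}

fn main() {
    // A sample line consistent with the 1.1.1.1 lookup shown later in this post.
    let line = "1.1.1.0\t1.1.1.255\t13335\tUS\tCLOUDFLARENET - Cloudflare, Inc.";
    if let Some(record) = parse_line(line) {
        println!("{:?}", record);
    }
}
```

Returning `Option` lets a loader simply skip malformed lines instead of aborting on them.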

Rust crates

I have written a library and command-line tools that perform IP lookups in the ASN database file obtained from IPtoASN.

The asn-db crate is a library that reads and indexes the TSV ASN database file for fast lookups of networks containing a given IP address. The asn-tools crate is a set of command-line tools to update and query a local copy of the index.
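The internals of asn-db are not described here, but one common way to make "which network contains this IP?" fast is to keep the ranges sorted by start address and binary-search them. The sketch below assumes non-overlapping IPv4 ranges; `RangeIndex` and its methods are hypothetical and not the asn-db API.

```rust
use std::net::Ipv4Addr;

/// A minimal in-memory index over NON-OVERLAPPING IPv4 ranges
/// (hypothetical type; asn-db's real index may work differently).
pub struct RangeIndex {
    // (start, end, AS number) as integers, sorted by start address.
    ranges: Vec<(u32, u32, u32)>,
}

impl RangeIndex {
    pub fn new(mut ranges: Vec<(Ipv4Addr, Ipv4Addr, u32)>) -> Self {
        ranges.sort_by_key(|r| r.0);
        let ranges = ranges
            .into_iter()
            .map(|(start, end, asn)| (u32::from(start), u32::from(end), asn))
            .collect();
        RangeIndex { ranges }
    }

    /// Returns the AS number of the range containing `ip`, if any.
    pub fn lookup(&self, ip: Ipv4Addr) -> Option<u32> {
        let ip = u32::from(ip);
        // Count of ranges starting at or before `ip`; the only candidate is the last of them.
        let idx = self.ranges.partition_point(|&(start, _, _)| start <= ip);
        let &(_, end, asn) = self.ranges.get(idx.checked_sub(1)?)?;
        if ip <= end { Some(asn) } else { None }
    }
}

fn main() {
    let index = RangeIndex::new(vec![
        (Ipv4Addr::new(8, 8, 8, 0), Ipv4Addr::new(8, 8, 8, 255), 15169),
        (Ipv4Addr::new(1, 1, 1, 0), Ipv4Addr::new(1, 1, 1, 255), 13335),
    ]);
    println!("{:?}", index.lookup(Ipv4Addr::new(1, 1, 1, 1)));
}
```

Each lookup costs O(log n) comparisons, which matters when every request in an access log needs one.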

Crate: asn-db

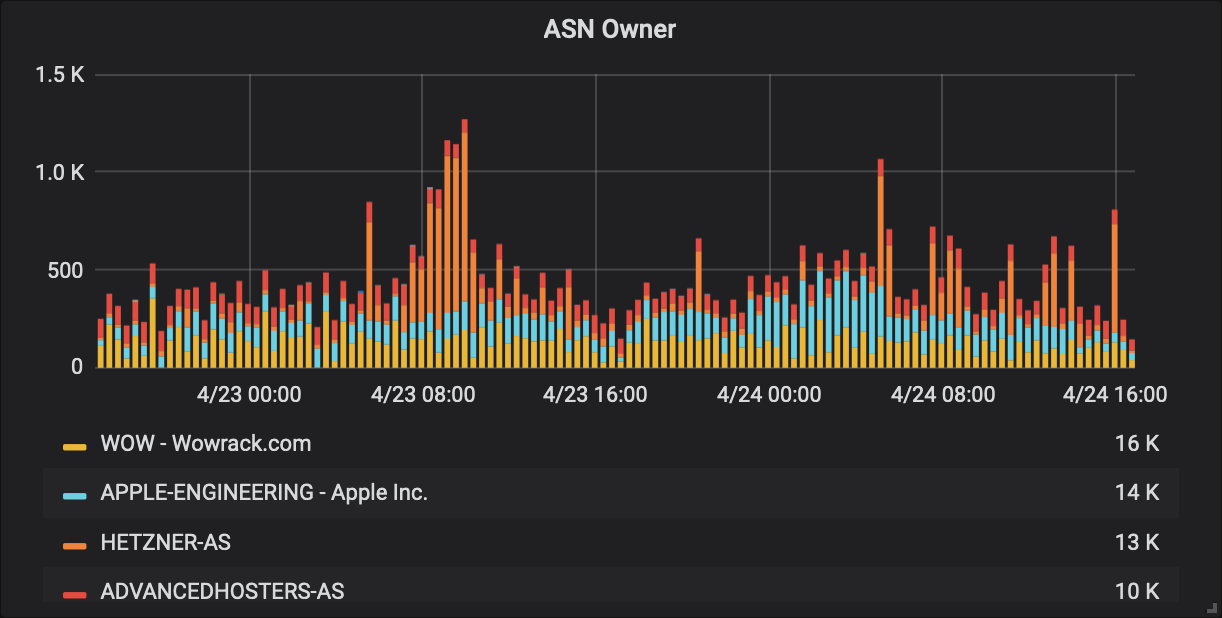

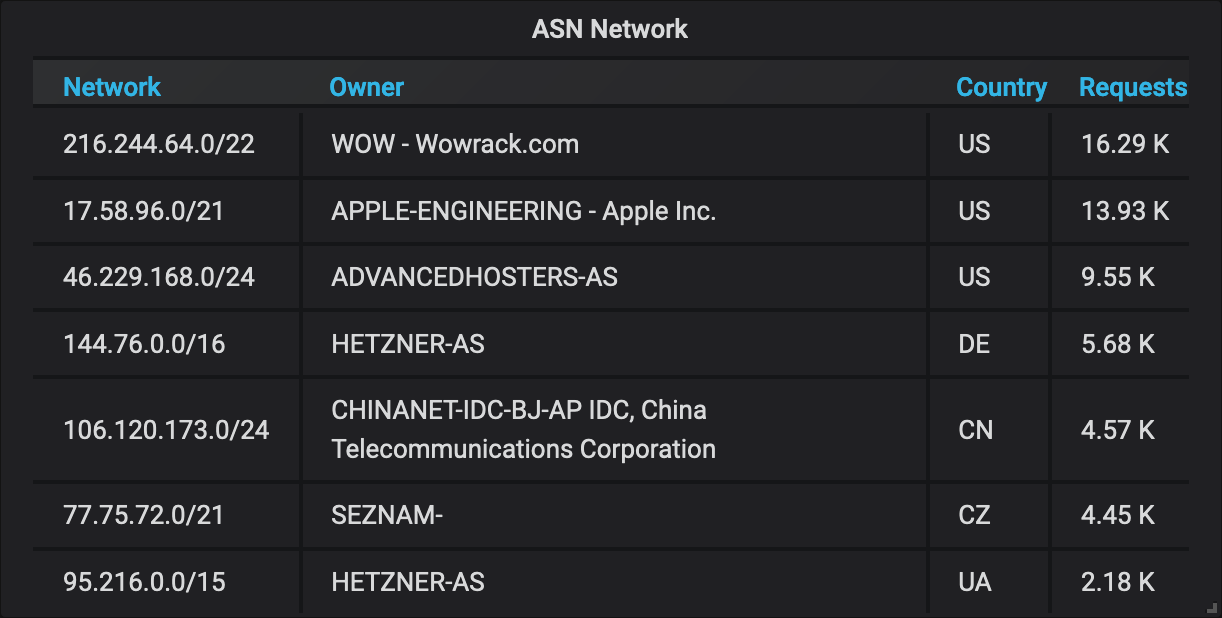

In my work, I use the asn-db library as part of an HTTP access log processing pipeline. For every request sent to the website, the client's IP address is looked up in the ASN database to obtain the matching network prefix record. The network prefix and ownership data are then stored, together with the IP address and other request and response data, in the access log database.
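The enrichment step can be pictured as follows. This is a sketch, not the actual pipeline: `LogEntry`, `NetworkInfo`, `EnrichedEntry` and `enrich` are hypothetical names, and the `lookup` closure stands in for an asn-db query, whose real API is not shown here.

```rust
use std::net::Ipv4Addr;

/// Ownership data for the network containing a client address (hypothetical type).
#[derive(Debug, Clone, PartialEq)]
pub struct NetworkInfo {
    pub network: String, // CIDR notation, e.g. "1.1.1.0/24"
    pub as_number: u32,
    pub country: String,
    pub owner: String,
}

/// A raw access-log entry; real entries carry many more request/response fields.
#[derive(Debug, Clone)]
pub struct LogEntry {
    pub client_ip: Ipv4Addr,
    pub path: String,
    pub status: u16,
}

/// An access-log entry stored together with its ASN lookup result.
#[derive(Debug, Clone)]
pub struct EnrichedEntry {
    pub entry: LogEntry,
    /// `None` when no network in the database contains the client address.
    pub network: Option<NetworkInfo>,
}

/// Looks up the client address and attaches the result to the entry.
/// `lookup` is a stand-in for a query against the ASN database.
pub fn enrich<F>(entry: LogEntry, lookup: F) -> EnrichedEntry
where
    F: Fn(Ipv4Addr) -> Option<NetworkInfo>,
{
    let network = lookup(entry.client_ip);
    EnrichedEntry { entry, network }
}

fn main() {
    // Toy lookup that only knows 1.1.1.0/24; a real one would query the index.
    let lookup = |ip: Ipv4Addr| -> Option<NetworkInfo> {
        let o = ip.octets();
        if o[0] == 1 && o[1] == 1 && o[2] == 1 {
            Some(NetworkInfo {
                network: "1.1.1.0/24".to_string(),
                as_number: 13335,
                country: "US".to_string(),
                owner: "CLOUDFLARENET - Cloudflare, Inc.".to_string(),
            })
        } else {
            None
        }
    };
    let entry = LogEntry { client_ip: Ipv4Addr::new(1, 1, 1, 1), path: "/".to_string(), status: 200 };
    println!("{:?}", enrich(entry, &lookup));
}
```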

Later, I can aggregate and visualize access information for each source subnet and network operator. With this information, I can often tell whether a particular set of requests is coming from a user network (such as a mobile or broadband operator) or from a virtual server hosting company, in which case the requests are likely to originate from bots. Having ASN data stored in the HTTP access log database also lets me drill down into the sources and character of the traffic, ensuring that any blocking action based on client IP addresses affects only bot traffic and not real visitors of the website.
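As a sketch of the aggregation step, the function below counts requests per (network, owner) pair and sorts them by volume. The input shape and the name `requests_per_network` are assumptions for illustration; with the data already in a log database, the same result would more likely come from a grouped query.

```rust
use std::collections::HashMap;

/// Counts requests per (network, owner) pair and returns them busiest first.
/// Each input row is a (network, owner) pair taken from an enriched log entry
/// (hypothetical shape, chosen for illustration).
pub fn requests_per_network<'a>(rows: &[(&'a str, &'a str)]) -> Vec<((&'a str, &'a str), usize)> {
    let mut counts: HashMap<(&str, &str), usize> = HashMap::new();
    for &row in rows {
        *counts.entry(row).or_insert(0) += 1;
    }
    let mut sorted: Vec<_> = counts.into_iter().collect();
    // Highest count first; ties broken by network and owner so output is deterministic.
    sorted.sort_by(|a, b| b.1.cmp(&a.1).then(a.0.cmp(&b.0)));
    sorted
}

fn main() {
    let rows = [
        ("54.248.0.0/13", "AMAZON-02 - Amazon.com, Inc."),
        ("105.0.0.0/15", "CELL-C"),
        ("54.248.0.0/13", "AMAZON-02 - Amazon.com, Inc."),
    ];
    for ((network, owner), n) in requests_per_network(&rows) {
        println!("{n:>6}  {network:<18} {owner}");
    }
}
```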

Crate: asn-tools

The asn-tools crate contains two command-line programs that use the asn-db library.

Run the asn-update program to download the TSV file from the IPtoASN website and index it into a locally stored file.

Then use asn-lookup to load and query the local index for information.

The asn-lookup tool takes a list of IP addresses, either as command-line arguments or as tabular (CSV) data on standard input, and outputs the resolved ASN database records with their matching networks in various formats.

$ asn-lookup 1.1.1.1 8.8.8.8 9.9.9.9

Network Country AS Number Owner Matched IPs

1.1.1.0/24 US 13335 CLOUDFLARENET - Cloudflare, Inc. 1.1.1.1

8.8.8.0/24 US 15169 GOOGLE - Google LLC 8.8.8.8

9.9.9.0/24 US 19281 QUAD9-AS-1 - Quad9 9.9.9.9
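If, as the Matched IPs column suggests, addresses that fall into the same network are reported together on one row, the grouping can be sketched as below. `group_by_network` and the `Prefix` alias are hypothetical, and the `lookup` closure stands in for a real ASN database query; this is not the asn-lookup source.

```rust
use std::collections::BTreeMap;
use std::net::Ipv4Addr;

/// A network prefix: base address plus prefix length (hypothetical alias).
pub type Prefix = (Ipv4Addr, u8);

/// Groups addresses by the network that contains them, so each network appears
/// once with all the addresses that matched it. Unmatched addresses are returned separately.
pub fn group_by_network<F>(ips: &[Ipv4Addr], lookup: F) -> (BTreeMap<Prefix, Vec<Ipv4Addr>>, Vec<Ipv4Addr>)
where
    F: Fn(Ipv4Addr) -> Option<Prefix>,
{
    let mut groups: BTreeMap<Prefix, Vec<Ipv4Addr>> = BTreeMap::new();
    let mut unmatched = Vec::new();
    for &ip in ips {
        match lookup(ip) {
            Some(prefix) => groups.entry(prefix).or_default().push(ip),
            None => unmatched.push(ip),
        }
    }
    (groups, unmatched)
}

fn main() {
    // Toy lookup that only knows 54.248.0.0/13 (54.248.0.0 to 54.255.255.255).
    let lookup = |ip: Ipv4Addr| -> Option<Prefix> {
        let o = ip.octets();
        if o[0] == 54 && (o[1] & 0xF8) == 248 {
            Some((Ipv4Addr::new(54, 248, 0, 0), 13))
        } else {
            None
        }
    };
    let ips = [Ipv4Addr::new(54, 248, 1, 1), Ipv4Addr::new(54, 250, 9, 9), Ipv4Addr::new(66, 249, 64, 1)];
    let (groups, unmatched) = group_by_network(&ips, lookup);
    for ((net, len), matched) in &groups {
        println!("{}/{} {:?}", net, len, matched);
    }
    println!("unmatched: {:?}", unmatched);
}
```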

Managing bot traffic

Once I have identified the IP addresses of badly behaving bots, I use the asn-lookup tool to get the list of networks these bots operate from.

I then use this list as the basis for an access control list (ACL) that blocks access to the website.

This way, I can block whole ranges of IP addresses that malicious traffic comes from, keeping my ACLs short and blocking bots even if they keep changing IP addresses within their networks.
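Blocking by network rather than by address turns the ACL check into a prefix comparison: an address belongs to a network when its leading bits match the network's. The sketch below shows this for IPv4 only; `Cidr` and `is_blocked` are hypothetical names, and in a real deployment the check would typically live in the reverse proxy's configuration rather than in Rust code.

```rust
use std::net::Ipv4Addr;

/// An IPv4 network in CIDR form, e.g. 54.248.0.0/13 (hypothetical type).
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct Cidr {
    base: u32,
    mask: u32,
}

impl Cidr {
    /// Parses "a.b.c.d/len"; any host bits in the base address are cleared.
    pub fn parse(s: &str) -> Option<Cidr> {
        let (addr, len) = s.split_once('/')?;
        let addr: Ipv4Addr = addr.parse().ok()?;
        let len: u32 = len.parse().ok()?;
        if len > 32 {
            return None;
        }
        // checked_shl avoids overflow when shifting by 32 (i.e. len == 0 means "match all").
        let mask = u32::MAX.checked_shl(32 - len).unwrap_or(0);
        Some(Cidr { base: u32::from(addr) & mask, mask })
    }

    /// True if the leading `len` bits of `ip` equal the network's.
    pub fn contains(&self, ip: Ipv4Addr) -> bool {
        (u32::from(ip) & self.mask) == self.base
    }
}

/// True if `ip` falls inside any network on the block list.
pub fn is_blocked(acl: &[Cidr], ip: Ipv4Addr) -> bool {
    acl.iter().any(|net| net.contains(ip))
}

fn main() {
    // Networks taken from the asn-lookup puppet example in this post.
    let acl: Vec<Cidr> = ["54.248.0.0/13", "105.0.0.0/15"]
        .iter()
        .filter_map(|s| Cidr::parse(s))
        .collect();
    println!("{}", is_blocked(&acl, Ipv4Addr::new(54, 251, 3, 7)));
}
```

Two entries here cover over half a million addresses, which is why network-level ACLs stay short even when bots rotate addresses.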

I use this method as the last line of defence when bot traffic is harder to single out and automatic per-IP throttling is not sufficient.

I use the Varnish HTTP reverse proxy and cache in front of the website's back-end servers, and I manage its configuration with the Puppet configuration management system.

To make my life easier, I have added a puppet output format to the asn-lookup tool, so I can use its output as-is in my configuration manifests.

$ asn-lookup -o puppet 105.0.0.123 54.248.1.1 66.249.64.1

'54.248.0.0/13', # US 16509 AMAZON-02 - Amazon.com, Inc. (54.248.1.1)

'66.249.64.0/20', # US 15169 GOOGLE - Google LLC (66.249.64.1)

'105.0.0.0/15', # ZA 37168 CELL-C (105.0.0.123)
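Each line of the puppet output above is a single-quoted network string followed by a comment with the country, AS number, owner and matched address. A formatter reproducing that layout can be sketched as follows; `puppet_line` is hypothetical rather than the asn-tools code, and joining several matched addresses with ", " is an assumption, since the example shows only one address per network.

```rust
/// Formats one matched network as a Puppet array element with a trailing
/// comment, following the layout of the asn-lookup example.
/// Joining multiple matched IPs with ", " is an ASSUMPTION.
pub fn puppet_line(network: &str, country: &str, as_number: u32, owner: &str, matched: &[&str]) -> String {
    format!("'{}', # {} {} {} ({})", network, country, as_number, owner, matched.join(", "))
}

fn main() {
    println!("{}", puppet_line("105.0.0.0/15", "ZA", 37168, "CELL-C", &["105.0.0.123"]));
}
```

Because each element ends with a comma and carries its description in a comment, the lines can be pasted straight into a Puppet array without further editing.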

Code and installation

These tools and the library are released under the MIT licence and are free to use. The ASN database is published on the IPtoASN website, maintained by Frank Denis, under a Public Domain Dedication.

For installation instructions and detailed usage, see the asn-tools and asn-db source repositories (also available on my GitHub profile).